How safe, unbiased, and beneficial for society is AI?

This question troubles many decision-makers in technology, business, and public policy.

AI technologies have become a vital part of our lives, so the ethical considerations associated with their use are increasingly important. That’s especially true as AI systems become more autonomous and are used in applications that play significant roles for individuals and society as a whole.

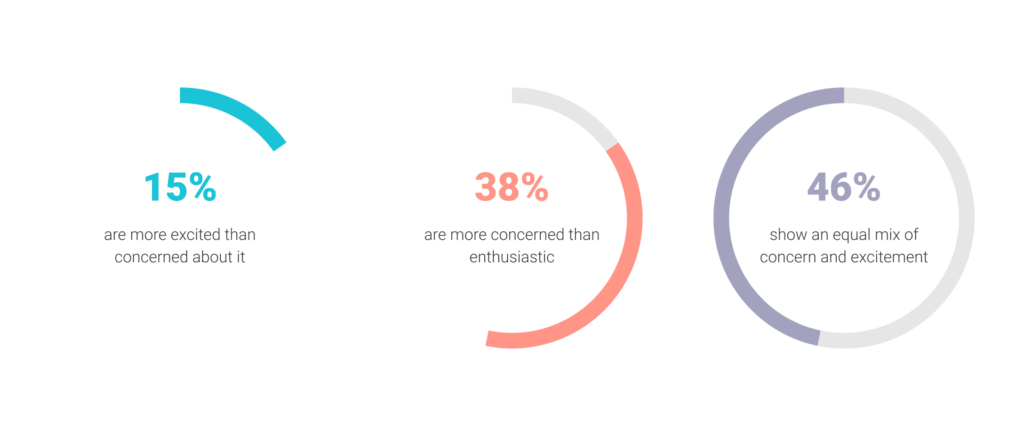

For instance, a recent Pew Research Center survey revealed a rather cautious attitude among Americans toward the growing use of AI in daily life:

- 15% say they are more excited than concerned about it;

- Over 38% are more concerned than excited;

- More than 46% feel an equal mix of concern and excitement.

And this is just the tip of the iceberg. The roots of this concern are much deeper, and there’s so much work to be done to ensure that AI is developed and used in a way that’s responsible, transparent, and aligned with societal values and needs.

This article defines ethics in AI, outlines its key elements, and explains why it remains a concern. We’ll also highlight how prominent organizations and corporations are tackling the issue and suggest steps that companies and societies can take to improve it.

What Makes It an Issue?

So, why are we talking about it in the first place?

Artificial intelligence has rapidly become a transformative technology that improves operations, services, and products. However, its increasing usage has raised ethical concerns about the potential negative impacts of these systems on society. Consequently, businesses need to consider the moral implications of AI, not just for the sake of ethical behavior but also to avoid several kinds of risk. Here’s why.

Privacy and security concerns: AI systems can collect and process large amounts of personal data, raising concerns about privacy and security. If companies don’t handle this data responsibly, they can lose the trust of customers and stakeholders.

Lack of transparency: Some AI systems are complex and hard to understand, making it difficult for businesses to explain how they reach decisions. This can lead to a lack of transparency and accountability in decision-making processes.

Bias and discrimination: AI systems can reproduce biases and discrimination that exist in society. Businesses that use these systems in decision-making processes can produce unfair outcomes for individuals and entire groups.

Legal and regulatory compliance: Businesses must comply with various legal and regulatory requirements when using AI systems. Otherwise, they can face legal and regulatory consequences.

Reputation and brand damage: If a company uses AI solutions in a way considered harmful, it can damage its reputation and brand. This can result in losing customers, revenue, and market share.

Impact on employment: AI-enabled systems can automate specific tasks and functions, leading to job displacement and unemployment. If businesses don’t consider the ethical side of these impacts, the consequences can be negative for their employees and for society as a whole.

The Fundamentals: What Ethics in AI Is and Its Key Elements

Let’s cover the basics before diving deeper into solutions to this problem.

So, what does it all mean?

Ethics in AI refers to the principles and standards that guide the development, deployment, and use of artificial intelligence technologies. It involves considering the moral implications of AI and ensuring that these technologies are used responsibly, fairly, and transparently, and don’t cause harm to individuals or society.

Generally, developers and businesses must ensure that AI systems don’t discriminate against certain groups of people through biased data or algorithms. They also need to be transparent about how these systems work and accept accountability for any adverse consequences arising from their use.

So, it’s all about ensuring that AI technologies are developed and used in a way that aligns with moral values and principles and doesn’t cause any harm or violate any human rights.

Core Aspects of Ethics in AI

Several key points are essential for ethics in AI:

- Transparency: AI systems should be designed and developed transparently so that users, stakeholders, and other interested parties can understand how they operate. This includes clearly explaining how the AI system makes decisions and what data it uses to make them.

- Fairness: AI-enabled solutions should not discriminate against individuals or groups based on race, gender, age, or any other characteristics. Developers must train their systems on unbiased data and take measures to mitigate any potential biases.

- Accountability: Developers must take responsibility for the decisions made by their AI systems. They should establish mechanisms to monitor the system’s performance, ensure it operates within ethical boundaries, and address any issues that arise.

- Privacy: AI systems should respect individuals’ privacy and protect their personal data from unauthorized access. Developers must take measures to protect user data and comply with relevant privacy regulations.

- Safety: AI systems should be designed and developed with safety in mind. This includes ensuring the system operates within established safety limits, preventing harm to people or the environment, and implementing appropriate safeguards.

- Human oversight: AI systems should remain subject to human supervision and control. Developers must ensure that businesses and users understand the workflow and can intervene in the system’s decision-making process when necessary.
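
To make the fairness point above a bit more concrete, here is a minimal Python sketch of one common check: comparing how often a system makes a positive decision for each group. The function names, the sample decisions, and the group labels `"a"` and `"b"` are all hypothetical and chosen only for illustration. A real audit would use several metrics and far more data, so treat this as a starting point rather than a complete method.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Return the share of positive decisions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions, groups):
    """Return the difference between the highest and lowest selection rate."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical example: 1 = approved, 0 = rejected.
decisions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(decisions, groups)
gap = demographic_parity_gap(decisions, groups)
print(rates)
print(gap)
```

A gap close to zero suggests that both groups receive positive decisions at similar rates, while a large gap is a signal to investigate the data and the model more closely. It does not prove discrimination on its own.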

Is Society Taking Any Steps?

There has been a growing recognition of the importance of ethical considerations in AI in recent years. As a result, many companies, policymakers, and civil society organizations are working to promote ethical practices in AI development and use.

Organizations and Institutes

Many organizations are working to promote ethics in AI. Each has a unique focus and approach, but all share the goal of fostering responsible and ethical development and use of AI. Here are just a few prominent programs that advance and nurture ethical AI practices:

- Partnership on AI: This consortium of tech companies, civil society organizations, and academics aims to promote the responsible development and use of AI. Its activities include research, development of best practices, and engagement with policymakers and the public.

- IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems: The program aims to advance the ethical and responsible development and use of AI. Its work includes developing standards and guidelines, engaging with stakeholders, and promoting education and awareness.

- AI Now Institute: This research institute focuses on the social implications of AI. Their work includes research on bias and discrimination, labor and automation, and transparency and accountability.

- Centre for the Governance of AI: The research center concentrates on the governance and regulation of AI. Its work includes research on accountability, transparency, and public policy issues.

- Future of Life Institute: The organization is focused on ensuring that the development of AI is in line with human values. Their activities include research on safety, transparency, and ethical decision-making issues.

- Global AI Ethics Consortium: This network of organizations promotes ethical AI practices. Their activities include research, best practices development, and stakeholder engagement.

- The Institute for Ethical AI and Machine Learning: This non-profit organization also aims to promote ethical and responsible AI practices. Its activities include research, development of best practices, and education and awareness-raising.

Tech Mammoths and Their Approaches to Ethics in AI

Many large corporations that work with AI have also taken steps to acknowledge and address ethical concerns related to these technologies. The following are just seven of them:

- Google’s AI Principles: Google has outlined a set of AI principles that guide the company’s development and use of AI. The principles include a commitment to transparency, fairness, and accountability, among other things. Google also announced an external advisory council on AI issues in 2019, although it was dissolved shortly afterward.

- Microsoft’s AI for Good Program: The program provides grants and resources to organizations that use AI for social good. The program focuses on ethical and responsible AI practices, and Microsoft has also developed an AI ethics checklist to guide the development and deployment of AI systems.

- IBM’s AI Ethics Board: IBM has established an AI Ethics Board to guide the company’s development and deployment of AI systems. The board includes ethics, policy, and technology experts and advises on transparency, accountability, and fairness issues.

- Amazon’s Responsible AI: Amazon has developed a set of principles that guide the company’s development and deployment of AI systems. The principles include a commitment to transparency, fairness, and accountability, among other things. The company has also established a machine learning fairness team to address issues related to bias and discrimination in AI systems.

- Intel’s AI for Social Good: Intel has established an AI for Social Good program that provides resources and support to organizations working on social impact projects. The program focuses on ethical and responsible AI practices, and Intel has also developed an AI ethics framework to guide the development and deployment of AI systems.

- Facebook’s Responsible AI: Facebook has built a set of principles that guide the company’s development and deployment of AI systems. These include a commitment to transparency, fairness, and accountability, among other things. Facebook has also established a Responsible AI team to address issues related to bias, discrimination, and other ethical concerns.

- NVIDIA’s AI Principles: NVIDIA has published a set of principles that guide the company’s further development of AI. The principles include a commitment to transparency, safety, and privacy, among other things. NVIDIA has also established an AI ethics committee to guide the development and deployment of AI systems.

What Happens Next?

Promoting ethics in AI for society and businesses requires a multifaceted approach that involves stakeholders from a wide range of backgrounds and perspectives. Here are a few suggestions on what companies, researchers, and institutions can do to promote ethics in AI:

- Education and awareness: Education and awareness-raising activities can help businesses, policymakers, and the general public understand the ethical implications of AI. These activities can include training programs, public lectures, and awareness campaigns that highlight the ethical considerations related to AI.

- Stakeholder engagement: Engaging a diverse range of stakeholders, including AI developers, civil society organizations, and affected communities, can help to promote ethical practices in AI development and use. Such activities may include consultation processes, participatory design workshops, and other collaborative approaches.

- Policy and regulation: Developing policies and regulations related to AI development and use can ensure that ethical considerations are incorporated into AI practices. This can include standards and guidelines, certification schemes, and legal frameworks that promote responsible and ethical AI practices.

- Ethical design and development: Companies and developers should integrate ethical considerations into the design and development of AI systems from the outset. This means addressing privacy, bias, transparency, and accountability issues throughout the development process.

- Independent auditing and oversight: Such mechanisms can help ensure that AI systems are developed and used ethically and responsibly. This can include third-party audits, regulatory oversight, and industry self-regulation mechanisms.

As we can see, ethics in AI remains an important issue that requires ongoing attention and action from a wide range of stakeholders, and promoting it takes a comprehensive approach that spans education, engagement, regulation, design, and oversight.

We at Unidatalab adhere to the principles above, bringing security, privacy, and goodwill to your business.

Ready to discuss a new AI project? Then let us know!