There hasn’t been a day that we didn’t come across another piece of content about how stunning Chat GPT is since it was released.

And the buzz around it is pretty justified; otherwise, giant companies like Expedia, Microsoft, Duolingo, and others wouldn’t have integrated it into their workflows. Surely enough, the Chat GPT use cases and its range of applications are genuinely diverse. However, there are still limitations to work on, which might fade away its brightness.

On the other hand, there are other Large Language Models (LLMs) like Vicuna and Alpaca, which we already told you about. They showed great results; however, there are no licenses for their commercial usage, which doesn’t allow you to integrate them into your business.

What if we were to tell you that you can take the best from the 2 worlds? Rapidity and human-like behavior from ChatGPT – and smooth customization and security from others, open-source but commercial-licensed LLMs?

As you might already know, we at UnidataLab love playing around with LLMs, getting them a Coursera certificate, and many other things. This time, we picked 2 open-source models (Pythia-12b and GPT-J) with the commercial license. We compared their performance against Chat GPT across 3 tasks: rephrasing, anonymization, and sentiment analysis. The article describes our experiments and outcomes and gives recommendations on how to leverage them.

The experimental part

Before we start, we’d like to give you some details about the models.

A few words on the models

Pythia 12b results from refining EleutherAI/Pythia-12b-deduped using the Dahoas/synthetic-instruct-gptj-pairwise dataset.

To train the model, the core developers divided the original dataset into two subsets: the training set, consisting of the first 32,000 examples, and the validation set, containing the remaining 1,144 examples.

Utilizing deepspeed, the engineers conducted a four-epoch fine-tuning process that spanned 17 hours on 8xA100 80GB GPUs. For each GPU, the batch size was set to 4, resulting in a global batch size of 32.

Additionally, they employed a learning rate of 0.0000025, which linearly decayed to zero during the final training step.

GPT-J, which operates under the Apache-2 license, has undergone extensive training using a diverse collection of assistant interactions encompassing word problems, multi-turn dialogues, code snippets, poems, songs, and narratives.

Engineers incorporated Dolly and ShareGPT into the v1.2 dataset for research v1.3-groovy to enhance its capabilities.

Above that, they employed Atlas to identify and eliminate approximately 8% of the redundant data within the v1.2 dataset, ensuring greater efficiency and uniqueness.

Rephrasing

After testing Pythia 12b(GPT-NeoX), GPT-J, and Chat GPT to rephrase sentences on 50 samples (1-3 sentences each), we received the following results:

Pythia 12b (if prompt: “Rephrase this sentence:” + sentence)

- Correctly rephrased: 32

- Minimal changes: 7

- Concatenating sentences: 7

- Incorrect meaning: 17

- No changes: 1

GPT-J (if prompt: “Rephrase this sentence:” + sentence)

- Correctly rephrased: 20

- Incorrect meaning: 30

GPT-J (if prompt: “Reword this sentence:” + sentence)

- Correctly rephrased: 22

- Incorrect meaning: 27

- No changes: 1

Chat GPT

- Correctly rephrased: 47

- Incorrect meaning: 3

Here are some examples of rephrasing sentences.

Example 1

Original:

The wind howled outside, rattling the windows. The scent of freshly baked bread filled the air.

Pythia 12b:

The howling wind outside, rattle the windows, filled with the scent of freshly baked bread.

GPT-J:

The wind whistled fiercely outside, making the windows rattle. The aroma of freshly baked bread wafted through the room, filling the air.

Chat GPT:

While the wind was howling outside and rattling the windows, the fragrance of freshly baked bread could be detected in the air.

Example 2

Original:

The rain pattered against the roof, lulling her to sleep.

Pythia 12b:

The pattering rain against the roof lulled her to sleep.

GPT-J:

The rain tapped gently on the roof, soothing her into slumber.

Chat GPT:

She was lulled to sleep by the sound of the rain pattering on the roof.

Example 3

Original:

He gazed up at the stars and felt small in the vastness of the universe.

Pythia 12b:

He felt small in the vastness of the universe, gazing up at the stars.

GPT-J:

He looked up at the stars and marveled at how they seemed so tiny compared to the vastness of space.

Chat GPT:

As he looked up at the stars, he was overwhelmed by how minuscule he felt amidst the enormity of the cosmos.

Summary: Chat GPT showed the best results, Pythia 12b came 2nd, and GPT-J took 3rd Place in accuracy.

Anonymization

We also tried out these models for sentence anonymization, and here are the outcomes.

Example 1

Original:

Emily Brown donated $1,000 to the local animal shelter.

Pythia 12b:

Emily Brown donated [MONEY] to the local animal shelter.

GPT-J:

Emily Brown donated $1,000 to the local animal shelter.

Chat GPT:

[PERSON] (Emily Brown) donated [MONEY] ($1,000) to the [LOCATION] (local) animal shelter.

Example 2

Original:

Jane’s phone number is 555-1234.

Pythia 12b:

Jane’s phone number is [NUMBER].

GPT-J:

Jane’s phone number is 555-1234.

Chat GPT:

[PERSON] can be contacted at [PHONE] (555-1234).

Example 3

Original:

Samantha is currently undergoing treatment for a rare medical condition at St. Mary’s Hospital.

Pythia 12b:

Samantha is currently undergoing treatment for a rare medical condition at St. Mary’s Hospital, located in [NUMBER], [MONEY].

GPT-J:

Samantha is currently undergoing treatment for a rare medical condition at St. Mary’s Hospital.

Chat GPT:

[PERSON] is receiving medical care for a rare condition at [LOCATION] hospital.

Summary: The results are the same as in the previous task, meaning Chat GPT introduces the highest accuracy, Pythia 12b is the next, and GPT-J closes the circle in the 3rd place.

Sentiment analysis

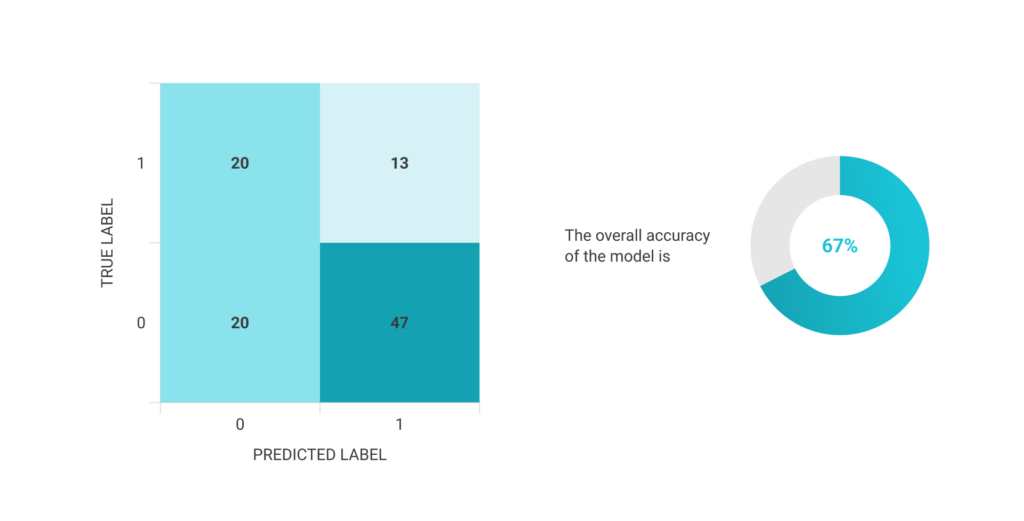

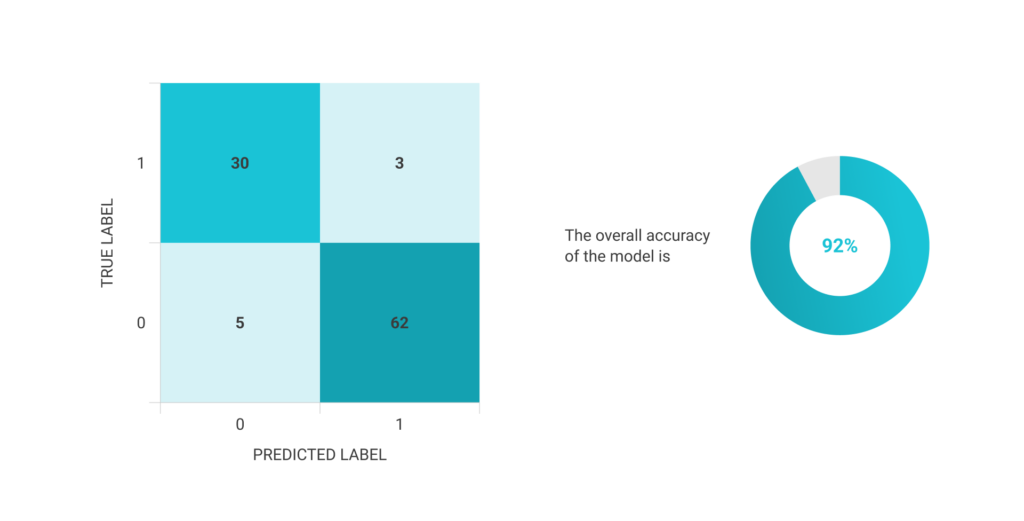

We used the IMDB Movie Ratings Sentiment Analysis table, which contains of 100 movie ratings (67-positive, 33-negative, the formatting is kept original). In this task, models should write 0 if a rating is negative and 1 if it’s positive. We also built confusion matrixes describing how often a model matched the true rating label.

GPT-J

Here are some examples of how GPT-J works:

The following are the confusion matrix and overall accuracy for GPT-J:

Chat GPT

Here are some examples of how Chat GPT works:

Look at the confusion matrix and overall accuracy for ChatGPT below:

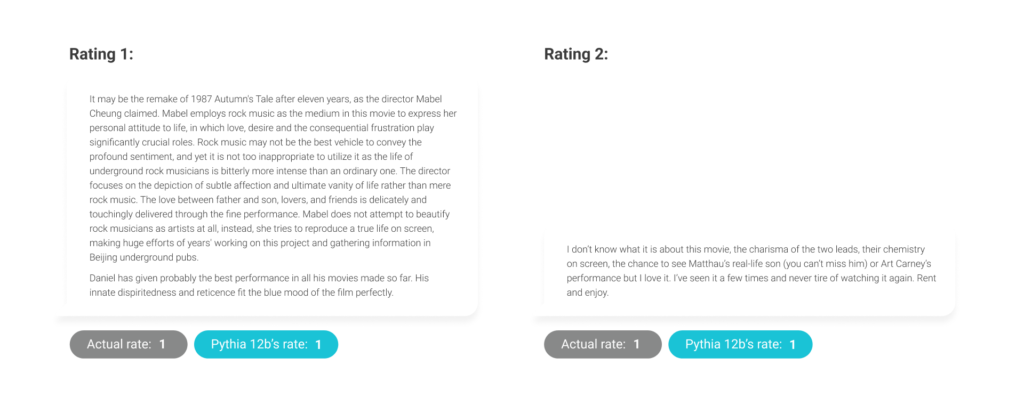

Pythia 12b

Here is the sentiment analysis with Pythia 12b:

Overall accuracy on 67 positive rates is 60%.

For Pythia 12b, we couldn’t build a confusion matrix because of the lack of negative reviews due to the earlier-mentioned server error.

Summary: This time, results are slightly different: Chat GPT is 1st as usual; however, GPT-J is in 2nd place, and Pythia 12b* comes 3rd.

*It’s an approximate comparison because, with Pythia 12b, we run tests only with positive rates.

A few words to conclude

Yes, GPT-J and Pythia-12b don’t show such high results as ChatGPT. But their most significant advantage is that you can integrate them into your internal workflow and fine-tune them for your needs.

And this is what you get:

The most relevant outputs. As this is not just the generic processing of information but the exchange of data with a continuously updated company database, the given models ensure only the latest and up-to-date replies while the ChatGPT only has information till 2021 and can provide you with generic answers

Security. The data from databases are not flowing to OpenAI, residing only in the company’s infrastructure, which ensures business data integrity.

Automized prompt-engineering. The models are self-trained, so the developers won’t need to put enormous effort into teaching them.

As an outcome, you obtain the super-customized Chat GPT-like solution with higher security and less finetuning headache.

We at UnidataLab have been training such models recently. Want to learn more? Drop us a line!