E-commerce conversational AI (consulting bot)

The purpose of conversational AI is to support communication on a given topic between a user and a specialist (a sales manager or customer support agent) when the specialist, for whatever reason, is not available to take part. Such systems are used in online banking, e-commerce, law, business consulting, healthcare, and other fields. For example, conversational AI can help place an order in a cafe, issue a loan, or advise on legal issues, all in the form of a conversation and without the participation of a human specialist. The advantage of such systems is 24/7 support and service without the need to maintain an entire staff of managers, which saves considerable time and cost. The use of conversational AI in e-commerce is the most common and, at the same time, the simplest. The requirements for such a system are lower than in the healthcare or legal domains, where clarity and accuracy are of extreme importance and the AI's decisions can lead to serious consequences. In e-commerce, AI is usually used to order a product or service, advise the client on item specifications, and provide support for backorders; it is most efficient where a person would otherwise follow a specific business flow.

Our client is a European company that specializes in e-commerce sales. They run a marketplace that carries the products and product lines of major manufacturers. Because their network is constantly growing and new manufacturers keep joining, a customer support problem has arisen: it is increasingly difficult for users to choose among many similar offers that differ in both characteristics and price. The demand for online consulting has increased, but there are not enough managers to handle it. The company therefore needed a system that would solve its support issues and stop the loss of potential clients.

Solution

Consult users through a conversational AI that partially performs a sales manager's functions and provides detailed information about a specific product upon request.

How it works

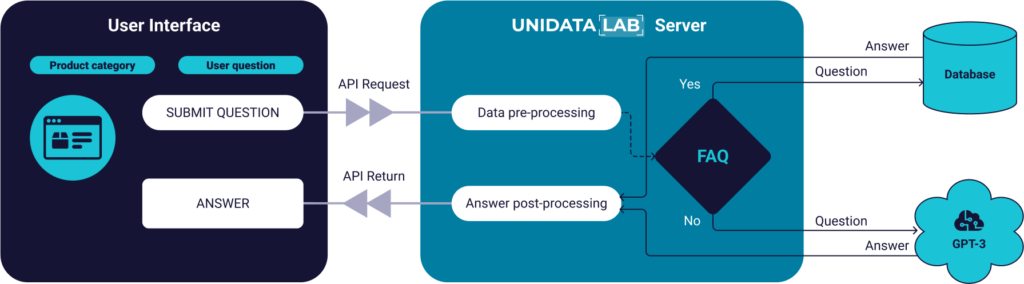

The system is built on the GPT-3 model. Generative Pre-trained Transformer (GPT) is an autoregressive language model that uses deep learning to generate human-like text. Here the third-generation model is used to answer questions based on the provided context information. A question and an item description (information about the product from its web page) are submitted as input to the model so that the system can understand the essence of the question and generate an appropriate answer.

Requests to the GPT server come from the Unidatalab server, which collects the necessary information (context, question, dialogue history) from the front-end and the knowledge base, preprocesses it, sends it to GPT, receives the result, post-processes it, and sends the response for the user to the front-end.

At the preprocessing stage, the system checks whether it has previously received similar questions about this product. If so, it does not call the GPT server but reads the answer from the database where it is stored. This reduces the number of requests to GPT and saves money for our client. A statistical evaluation of the requests determines which questions are added to the database: if the number of requests with similar questions about one product exceeds a certain limit, the question is considered frequently asked and is added to the database. At the post-processing stage, we check whether the GPT response is empty. If so, we replace it with a special phrase so that the user understands that the system does not have an answer and that they need to contact a human assistant.

The user selects the product they are interested in and enters a question in the corresponding UI field.

The front-end combines this question with the context (related to the product) and sends the request to the server through the API.

The server checks whether the given question is in the FAQ database. If it is, the answer is taken from the database (no request is made to the GPT server).

If the question is not in the FAQ list, the question and its context are sent to the GPT server, which processes them and returns the answer.

If the GPT server returns an empty answer, it is replaced with a specially composed answer to show that the system does not have a reply to the question and that the user needs a manager’s help.

After that, the server returns a response to the user.
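The request flow described in the steps above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the function names, the in-memory `faq` dictionary, the whitespace and case normalization used to match questions, and the wording of the fallback phrase are all assumptions, and `ask_gpt` stands in for whatever client actually calls the GPT server.

```python
from typing import Callable, Dict, Tuple

# Hypothetical fallback wording; the original text does not give the actual phrase.
FALLBACK_ANSWER = "Sorry, I don't have an answer to that. Please contact a manager."


def normalize(question: str) -> str:
    """Crude normalization (assumption) so trivially different spellings share one FAQ key."""
    return " ".join(question.lower().split())


def answer_question(
    product_id: str,
    question: str,
    context: str,
    faq: Dict[Tuple[str, str], str],
    ask_gpt: Callable[[str, str], str],
) -> str:
    """Return a stored FAQ answer if one exists, otherwise ask the model."""
    key = (product_id, normalize(question))
    if key in faq:
        return faq[key]  # FAQ hit: no request to the GPT server
    reply = ask_gpt(question, context).strip()
    # An empty model reply is replaced with the fallback phrase.
    return reply or FALLBACK_ANSWER
```

Passing the model call in as a parameter keeps the sketch runnable without network access and makes the FAQ and fallback branches easy to test in isolation.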

Our challenges:

Prepare phrases for testing

Testing the conversational AI required a dataset. Our team decided to test the AI on e-commerce questions, since those are what it will usually be asked. We therefore created a dataset of questions taken from the Best Buy online store and tested the AI on them.

Long and unclear answers

The answers the AI gave were too long and therefore hard to use (inconvenient for the client). Our AI team calibrated GPT so that the system responds in a concise, user-friendly way.

Number of categories

Since the client’s product assortment is very large, we suggested starting with a few of the most popular categories in order to shorten the AI implementation time, collect user feedback, make amendments, and then scale the bot to other product lines. This required choosing the key product categories. Since the client was uncertain about which to choose, we analyzed users’ requests and compiled the corresponding list.

Caching responses

Since requests to the GPT server are billable and the number of requests is very large, sending every question to GPT was not cost-effective for the client. We proposed caching logic to solve this problem: the most frequently asked questions are stored in the database, and if a similar question arises again, it is not sent to the GPT server; the answer is taken from the database instead. This decreased the number of requests to the GPT server.
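As a rough illustration of this caching logic, the sketch below counts how often a question is asked about a product and stores the answer once the count exceeds a limit. The class name, the threshold value, and the way "similar" questions are matched (exact match after lowercasing and collapsing whitespace) are assumptions made for the example; the real system may use a different similarity measure and a persistent database rather than in-memory dictionaries.

```python
from collections import Counter
from typing import Callable, Dict, Tuple

PROMOTION_THRESHOLD = 3  # assumed value; the actual limit is not stated in the text

Key = Tuple[str, str]


class FrequencyCache:
    """Stores an answer once a question has been asked more than `threshold` times."""

    def __init__(self, threshold: int = PROMOTION_THRESHOLD) -> None:
        self.threshold = threshold
        self.counts: Counter = Counter()
        self.answers: Dict[Key, str] = {}

    @staticmethod
    def _key(product_id: str, question: str) -> Key:
        # Assumed notion of "similar": same product, same text ignoring case and spacing.
        return product_id, " ".join(question.lower().split())

    def get_answer(
        self, product_id: str, question: str, ask_model: Callable[[str], str]
    ) -> str:
        key = self._key(product_id, question)
        if key in self.answers:
            return self.answers[key]  # served from the cache, no billable request
        self.counts[key] += 1
        answer = ask_model(question)  # billable request
        # Promote to the cache only when the limit is exceeded and the answer is non-empty.
        if self.counts[key] > self.threshold and answer.strip():
            self.answers[key] = answer
        return answer
```

With a threshold of 3, the first four occurrences of a question reach the model, and every later occurrence is answered from the cache.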

The scope of the description

Because GPT-3 limits the size of the context that can be transmitted, it was necessary to prepare a compact but comprehensive data format that conveys the product information concisely. Our team proposed several formats, and after discussion with the client, the context format was finalized.
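The finalized context format is not described here, so the following is only one possible shape of such a format: product attributes packed into short `key: value` lines until a size budget is reached. The function name, the line layout, and the character-based budget are all assumptions; a real GPT-3 limit is counted in tokens, so a production version would measure tokens rather than characters.

```python
from typing import Dict

MAX_CONTEXT_CHARS = 200  # assumed budget; real limits are measured in tokens


def build_context(
    name: str, attributes: Dict[str, str], max_chars: int = MAX_CONTEXT_CHARS
) -> str:
    """Pack product attributes into 'key: value' lines, in order, within a size budget."""
    lines = [f"Product: {name}"]
    used = len(lines[0])
    for key, value in attributes.items():
        line = f"{key}: {value}"
        # +1 accounts for the newline that joins this line to the previous one.
        if used + 1 + len(line) > max_chars:
            break  # stop at the first attribute that does not fit
        lines.append(line)
        used += 1 + len(line)
    return "\n".join(lines)
```

Because attributes are added in dictionary order, the caller decides which characteristics matter most by listing them first.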

Project stages

To better understand the nature of the problem, our team held several meetings with the client, during which we agreed on further actions and the final design of our solution.

Our team studied existing systems that could solve the client’s problem and tested their capabilities. For this purpose, a dataset of real-world user questions was collected and used for testing. Based on the results, the GPT engine was chosen because its answers were clear, accurate, and easy to understand.

The team prepared test and research reports presenting all the results and explaining the decisions made, and shared them with the client. Several coordination meetings followed. Before implementing the AI solution, the software team agreed on an API contract convenient for integration with the client’s pipeline.

Our AI team created an integration for the GPT-3 transformer that accepts the user's question and the context related to a specific product, preprocesses them into a form suitable for the model, and returns the GPT-generated answer to the user.
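The preprocessing step of such an integration might look something like the sketch below, which assembles the product context, any earlier dialogue turns, and the new question into a single text prompt. The prompt wording, the `Customer:` and `Assistant:` labels, and the function name are hypothetical and chosen only for illustration; the prompt actually used in the project is not documented here.

```python
from typing import List, Tuple


def build_prompt(context: str, history: List[Tuple[str, str]], question: str) -> str:
    """Combine product context, dialogue history, and the new question into one prompt."""
    parts = [
        "Answer the customer's question using only the product information below.",
        "",
        "Product information:",
        context.strip(),
        "",
    ]
    # Earlier (question, answer) turns give the model the conversation so far.
    for past_question, past_answer in history:
        parts.append(f"Customer: {past_question.strip()}")
        parts.append(f"Assistant: {past_answer.strip()}")
    parts.append(f"Customer: {question.strip()}")
    parts.append("Assistant:")  # the model is expected to continue from here
    return "\n".join(parts)
```

Ending the prompt with an open `Assistant:` line is a common way to steer a completion-style model toward producing only the next reply.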

The source code was sent to the client as a separate component with the corresponding documentation.