AI Dubbing: customized text-to-speech solution

The speech tempo correction is used in cases where it is necessary to align the tempo of two speech segments. Such a need arises in speech-to-speech translation projects. It requires simultaneous interpreting from one language to another, and at the same time, the translated phrase should be with the same tempo and duration as an original one. This helps to avoid speech desynchronization of video and audio when the speaker starts talking on the video, and the actual speech starts too early or too late. The speech tempo correction is invaluable in automatic dubbing tasks. Text-to-speech systems used as text converters for such purposes do not allow you to know the duration/tempo of the voiced phrase before the synthesis. Therefore, if you want to find the right tempo, you need to synthesize the phrase twice: the first time to find out the duration and the second to adjust the tempo. Using speech correction systems greatly simplifies the work of editors, as they will know if the text needs to be edited to meet certain requirements (tempo/duration) before synthesizing, and you don't need to spend money on additional text-to-speech requests.

Our client is a European start-up company that provides speech-to-speech translation services. Their application allows translating speech from one language to another and is used for automatic dubbing. From a technical standpoint, the existing system is a combination of a few separate web services. One of the core services is the text-to-speech engine (TTS). TTS gets text as input and returns audio with synthesizing speech. Our client needed to solve the problem of aligning the tempo of the original and translated speech. If the original text was translated from English into German, in some cases the German phrase sounds longer than the English one. And when the client tried to fit a longer phrase into the same time slot as in the original recording, the translated phrase sped up the tempo, and the phrase became unintelligible.

Solution

Correct the tempo of the translated speech by integrating into the client's pipeline a specialized component that predicts translated speech tempo and evaluates the duration difference between two corresponding speech segments.

How it works

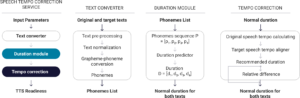

The service consists of several modules: Text-to-Phoneme Converter, Duration Predictor, and Tempo Corrector.

The algorithm works as follows:

The text-to-phoneme module converts both original and translated texts into a sequence of phonemes for each language.

Phonemes are used by the duration prediction AI model to estimate speech durations.

The tempo correction logic adjusts the duration of translated speech to align the tempo with the original recording.

The relative difference between original and translated speech is calculated and analyzed to make a recommendation if it can be safely used for dubbing or if some manual rephrasing of the translated text is required.

AI pipeline architecture

Our challenges:

The input of the duration module

It was important to choose the basis to determine the expected duration of the synthesized phrase. Options included words, symbols, phonemes, and syllables. We chose phonemes for durations prediction because their numbers correlate with a number of sounds. Words and symbols are not suitable for this solution because a phrase with the same number of symbols can have a different duration after synthesis in different languages. This leads to a significant error, especially for short phrases. And the use of syllables was rejected since converting the input text into syllables is more time-consuming.

Number of models

The speech duration service is expected to be language agnostic. Since the client is engaged in translation, this involves the use of a large number of languages. Our AI team decided to create AI models not for each language but for language groups to simplify the task of scaling the system in the future. Thus, Spanish, Portuguese, and Italian have a common model because they all belong to the Romance group of languages.

Punctuation symbols duration

For the tempo correction service to work correctly, it was necessary to consider the delay associated with punctuation since each symbol has a certain duration of silence, which affects the duration of the voiced phrase and, therefore, the tempo. Our team researched phrases of different lengths, languages, punctuation symbols, and their position in the sentence.

TTS number synthesizing

There was a problem with applying TTS logic to numbers and digits. Our team researched and identified that different numeric formats had several styles of voicing them. Therefore, our team offered the client to convert the numerical data format into the corresponding text format (e.g., 18.08.1996 – August eighteen, nineteen ninety-six) before using TTS.

Project stages

Our team made a few meetings with the client’s team to better understand the problem and prepare documents with AI pipeline architecture as well as a detailed explanation with our vision on how to solve the task.

At this stage, our AI team researched open source solutions for text-to-phonemes converter and phonemes durations predictions modules and prepared a summary of the accuracy of such systems.

We developed the API and corresponding AI logic which takes input parameters (texts, original duration, and acceptable difference) and returns a decision if the translated speech fits within the defined time boundaries.

The source code was sent to the client as a separate service with research results and corresponding technical documentation.